2026-02-27 University of California San Diego (UCSD)

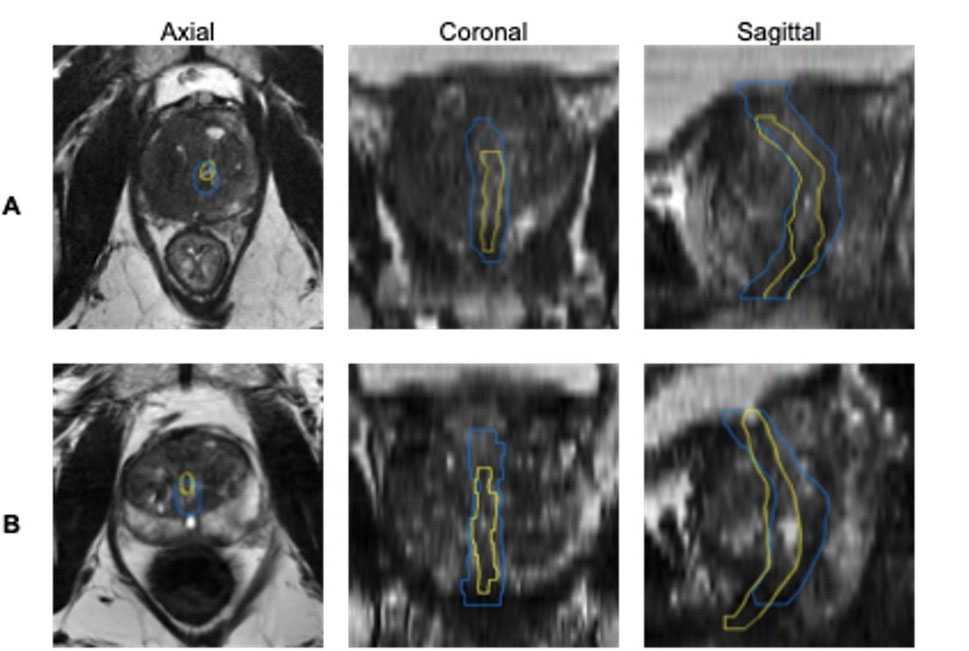

Side‑by‑side MRI images show how an AI system outlines the urethra compared with outlines drawn by a team of medical specialists. In each view (axial, coronal and sagittal), the yellow line shows the experts’ outline and the blue line shows the AI’s outline. Panel A shows one example where the AI only partly matches the expert outline, while panel B shows another example where the AI comes closer to the expert outline, especially along the edges. Credit: Yuze Song, electrical engineering Ph.D. student at UC San Diego

<Related information>

- https://today.ucsd.edu/story/san-diego-supercomputer-center-powers-ai-model-to-improve-prostate-cancer-care

- https://www.sciencedirect.com/science/article/pii/S0167814025052351

Urethra contours on MRI: Multidisciplinary consensus educational atlas and reference standard for artificial intelligence benchmarking

Yuze Song, Lily Nguyen, Anna M. Dornisch, Madison T. Baxter, Tristan Barrett, Anders M. Dale, Robert T. Dess, Mukesh Harisinghani, Sophia C. Kamran, Michael A. Liss, Daniel J.A. Margolis, Eric P. Weinberg, Sean A. Woolen, Tyler M. Seibert

Radiotherapy & Oncology, available online: 25 October 2025

DOI: https://doi.org/10.1016/j.radonc.2025.111231

Highlights

- Expert panel consensus reference dataset of urethra contours on prostate MRI.

- Deep-learning model matched or outperformed subspecialists in urethra segmentation.

- AI tool achieved better urethra coverage than physicians (81% vs 36%).

- Strong generalizability suggests readiness for prostate cancer RT planning use.

Abstract

Introduction

The urethra is a recommended avoidance structure for prostate cancer treatment. However, even subspecialist physicians often struggle to accurately identify it on available imaging. Automated segmentation tools show promise, but a lack of reliable ground truth or appropriate evaluation standards has hindered validation and clinical adoption. This study aims to establish a reference-standard dataset with expert consensus contours, define clinically meaningful evaluation metrics, and assess the performance and generalizability of a deep-learning-based segmentation model.

Materials and Methods

A multidisciplinary panel of four experienced subspecialists in prostate MRI generated consensus urethra contours on MRI data for 71 patients from 6 centers, establishing a reference standard. Four of these patients were previously used in an international study (PURE-MRI) where 62 physicians contoured the prostate and urethra. Using an independent training dataset (n = 151 patients, 1 center), we developed a deep-learning AI model for urethra segmentation. We evaluated the AI tool in the consensus reference dataset and compared it to human performance using Dice, percent urethra coverage, and maximum 2D (axial, in-plane) Hausdorff Distance (HD) from the reference standard.
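The three metrics named above can be illustrated with a minimal Python sketch. It is not the study's code, and several details are assumptions made only for illustration: masks are represented as sets of `(z, y, x)` voxel indices, "coverage" is taken to mean the fraction of reference voxels that fall inside the predicted contour (the abstract does not define it), and the maximum 2D Hausdorff distance is computed over all mask voxels on each axial slice where both masks are present. The function names `dice`, `coverage`, and `max_hd_2d` are hypothetical.

```python
from collections import defaultdict
from math import dist

# A mask is a set of (z, y, x) voxel indices; z is the axial slice index.


def dice(pred, ref):
    """Dice similarity coefficient: 2|A ∩ B| / (|A| + |B|)."""
    if not pred and not ref:
        return 1.0
    return 2 * len(pred & ref) / (len(pred) + len(ref))


def coverage(pred, ref):
    """ASSUMED definition: fraction of reference voxels inside the prediction."""
    return len(pred & ref) / len(ref) if ref else 0.0


def max_hd_2d(pred, ref, spacing_mm=(1.0, 1.0)):
    """Largest per-slice (in-plane) symmetric Hausdorff distance, in mm.

    ASSUMPTION: only axial slices present in both masks are compared.
    """
    def by_slice(mask):
        slices = defaultdict(list)
        for z, y, x in mask:
            # Convert in-plane indices to millimetres.
            slices[z].append((y * spacing_mm[0], x * spacing_mm[1]))
        return slices

    def directed(src, dst):
        # Farthest any source point is from its nearest destination point.
        return max(min(dist(p, q) for q in dst) for p in src)

    p, r = by_slice(pred), by_slice(ref)
    shared = p.keys() & r.keys()
    return max(
        (max(directed(p[z], r[z]), directed(r[z], p[z])) for z in shared),
        default=float("nan"),
    )


# Toy example: a one-voxel-wide reference "urethra" spanning 10 slices, and a
# prediction that is two voxels wide but stops after 8 slices.
ref = {(z, 5, 5) for z in range(10)}
pred = {(z, 5, x) for z in range(8) for x in (5, 6)}

print(round(dice(pred, ref), 3))                     # 0.615
print(coverage(pred, ref))                           # 0.8
print(max_hd_2d(pred, ref, spacing_mm=(0.5, 0.5)))   # 0.5
```

In this toy case the prediction covers 80% of the reference yet reaches a Dice of only about 0.62, which shows how sensitive an overlap score is to small discrepancies when the structure is only a voxel or so wide.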

Results

The AI model outperformed most physicians, achieving median Dice of 0.41 (vs. 0.33 for physicians), Coverage of 81 % (vs. 36 %), and Max 2D HD of 1.8 mm (vs. 1.6 mm) in the four PURE-MRI cases. In the full reference dataset, AI performance remained consistent, with Dice of 0.40, Coverage of 89 %, and Max 2D HD of 2.0 mm, indicating strong generalizability across a broader patient population and more varied imaging conditions.

Conclusion

We established a multidisciplinary consensus benchmark for segmentation of the urethra. The deep-learning model performs comparably to specialist physicians and demonstrates consistent results across multiple institutions. It shows promise as a clinical decision-support tool for accurate and reliable urethra segmentation in prostate cancer radiotherapy planning and studies of dose-toxicity associations.