2026-05-11 Columbia University

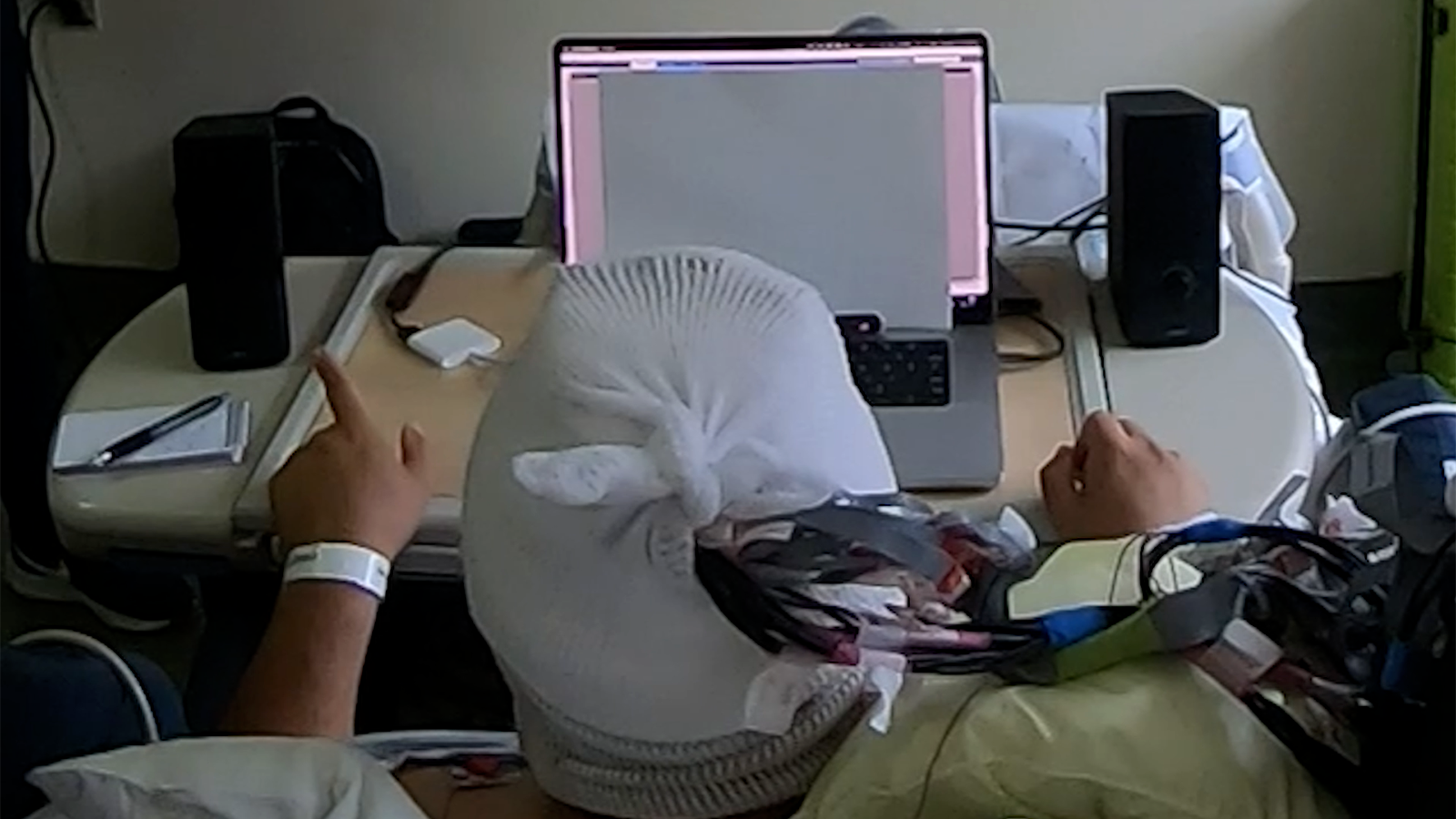

A hearing system monitoring this man’s brain activity amplifies a conversation played on his left while quieting one on his right, based on which conversation his brainwaves suggest he is paying attention to. (Vishal Choudhari / Mesgarani lab / Columbia’s Zuckerman Institute)

<Related information>

- https://zuckermaninstitute.columbia.edu/brain-controlled-hearing-system-proves-itself-first-human-studies

- https://www.nature.com/articles/s41593-026-02281-5

- https://www.nature.com/articles/nature11020

Real-time brain-controlled selective hearing enhances speech perception in multi-talker environments

Vishal Choudhari, Maximilian Nentwich, Sarah Johnson, Jose L. Herrero, Stephan Bickel, Ashesh D. Mehta, Daniel Friedman, Adeen Flinker, Edward F. Chang & Nima Mesgarani

Nature Neuroscience, Published: 11 May 2026

DOI: https://doi.org/10.1038/s41593-026-02281-5

Abstract

Understanding speech in noisy environments is difficult for many people, and current hearing aids often fail because they amplify all sounds rather than the talker of interest. Auditory attention decoding (AAD) offers a potential solution by using the listener’s brain signals to identify and enhance the attended speaker, but it has been unclear whether this can provide real-time perceptual benefits. Here we used high-resolution intracranial electroencephalography in patients undergoing neurosurgical procedures to implement a closed-loop system that achieves the decoding fidelity necessary to dynamically amplify the attended talker. Across multiple experiments, the system improved speech intelligibility, reduced listening effort and was consistently preferred by subjects. It also tracked both instructed and self-initiated attention shifts. By providing direct evidence that a real-time, brain-controlled hearing system can enhance perception, this work establishes a key performance benchmark for future auditory brain–computer interfaces and advances AAD from a theoretical concept to a validated solution for personalized assistive hearing.
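The abstract does not spell out the decoding algorithm, so the following is only a minimal sketch of the general closed-loop idea, assuming a correlation-based decision rule that is common in the AAD literature: compare a speech envelope estimated from neural data with each talker's envelope, pick the best match, and attenuate the other talkers. The function names (`pearson`, `decode_attended`, `remix`), the `suppress_db` parameter, and the toy signals are all hypothetical and are not taken from the paper.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def decode_attended(neural_env, talker_envs):
    """Return (index, scores): the talker whose envelope best matches the neural estimate."""
    scores = [pearson(neural_env, env) for env in talker_envs]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores


def remix(talkers, attended, suppress_db=9.0):
    """Sum the talkers, attenuating every unattended talker by suppress_db decibels."""
    atten = 10 ** (-suppress_db / 20)  # assumed amplitude attenuation factor
    n = len(talkers[0])
    return [
        sum((1.0 if i == attended else atten) * t[k] for i, t in enumerate(talkers))
        for k in range(n)
    ]


# Toy example: the "neural" envelope is a noisy copy of talker 0's envelope.
talker_a = [0, 1, 0, 1, 0, 1, 0, 1]
talker_b = [0, 0, 1, 1, 0, 0, 1, 1]
neural = [0.1, 0.9, 0.2, 1.1, 0.0, 0.8, 0.1, 1.0]

attended, scores = decode_attended(neural, [talker_a, talker_b])
output = remix([talker_a, talker_b], attended)
```

In a real-time setting this decision would be repeated over a sliding window, so the choice of window length trades decoding accuracy against how quickly the system follows a shift of attention.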

Selective cortical representation of attended speaker in multi-talker speech perception

Nima Mesgarani & Edward F. Chang

Nature, Published: 18 April 2012

DOI: https://doi.org/10.1038/nature11020

Abstract

Humans possess a remarkable ability to attend to a single speaker’s voice in a multi-talker background [1,2,3]. How the auditory system manages to extract intelligible speech under such acoustically complex and adverse listening conditions is not known, and, indeed, it is not clear how attended speech is internally represented [4,5]. Here, using multi-electrode surface recordings from the cortex of subjects engaged in a listening task with two simultaneous speakers, we demonstrate that population responses in non-primary human auditory cortex encode critical features of attended speech: speech spectrograms reconstructed based on cortical responses to the mixture of speakers reveal the salient spectral and temporal features of the attended speaker, as if subjects were listening to that speaker alone. A simple classifier trained solely on examples of single speakers can decode both attended words and speaker identity. We find that task performance is well predicted by a rapid increase in attention-modulated neural selectivity across both single-electrode and population-level cortical responses. These findings demonstrate that the cortical representation of speech does not merely reflect the external acoustic environment, but instead gives rise to the perceptual aspects relevant for the listener’s intended goal.