2026-04-09 California Institute of Technology (Caltech)

<Related information>

- https://www.caltech.edu/about/news/imagine-that-brain-uses-neurons-from-vision-system-when-forming-mental-imagery

- https://www.science.org/doi/10.1126/science.adt8343

- https://www.nature.com/articles/s41586-020-2350-5

- https://www.cell.com/cell/fulltext/S0092-8674(17)30538-X

A shared code for perceiving and imagining objects in human ventral temporal cortex

V. S. Wadia, C. M. Reed, J. M. Chung, L. M. Bateman, […] , and D. Y. Tsao

Science Published: 9 Apr 2026

DOI: https://doi.org/10.1126/science.adt8343

Editor’s summary

The ventral temporal cortex (VTC) is a brain area involved in identifying and categorizing visual stimuli. Wadia et al. performed single-neuron recording in the VTC of patients with epilepsy while the subjects were presented with real visual stimuli or were asked to imagine them. Deep network analysis showed that visually responsive neurons were tuned on specific axes. While imagining the objects, around 40% of the visually responsive VTC neurons were also robustly activated. Thus, mental imagery reactivates the same sensory codes used during visual stimuli, suggesting the existence of a generative model capable of synthesizing detailed sensory contents from an abstract, semantic representation. —Mattia Maroso

Structured Abstract

INTRODUCTION

Mental imagery refers to our brains’ capacity to generate percepts, emotions, and thoughts in the absence of external stimuli. This ability allows us to generate art, simulate actions and outcomes, remember previous experiences, and imagine new ones. Uncontrolled mental imagery can contribute to psychological disorders, including anxiety, schizophrenia, and posttraumatic stress disorder. Despite its importance in our lives, little is known about the single-neuron mechanisms of mental imagery. Neuroimaging results support a long-standing theory that imagery of a given sense is subserved by the reactivation of that specific sensory cortex. However, these studies lack the resolution to discern whether it is the same neurons or separate circuitry roughly located in the same regions that reactivates.

RATIONALE

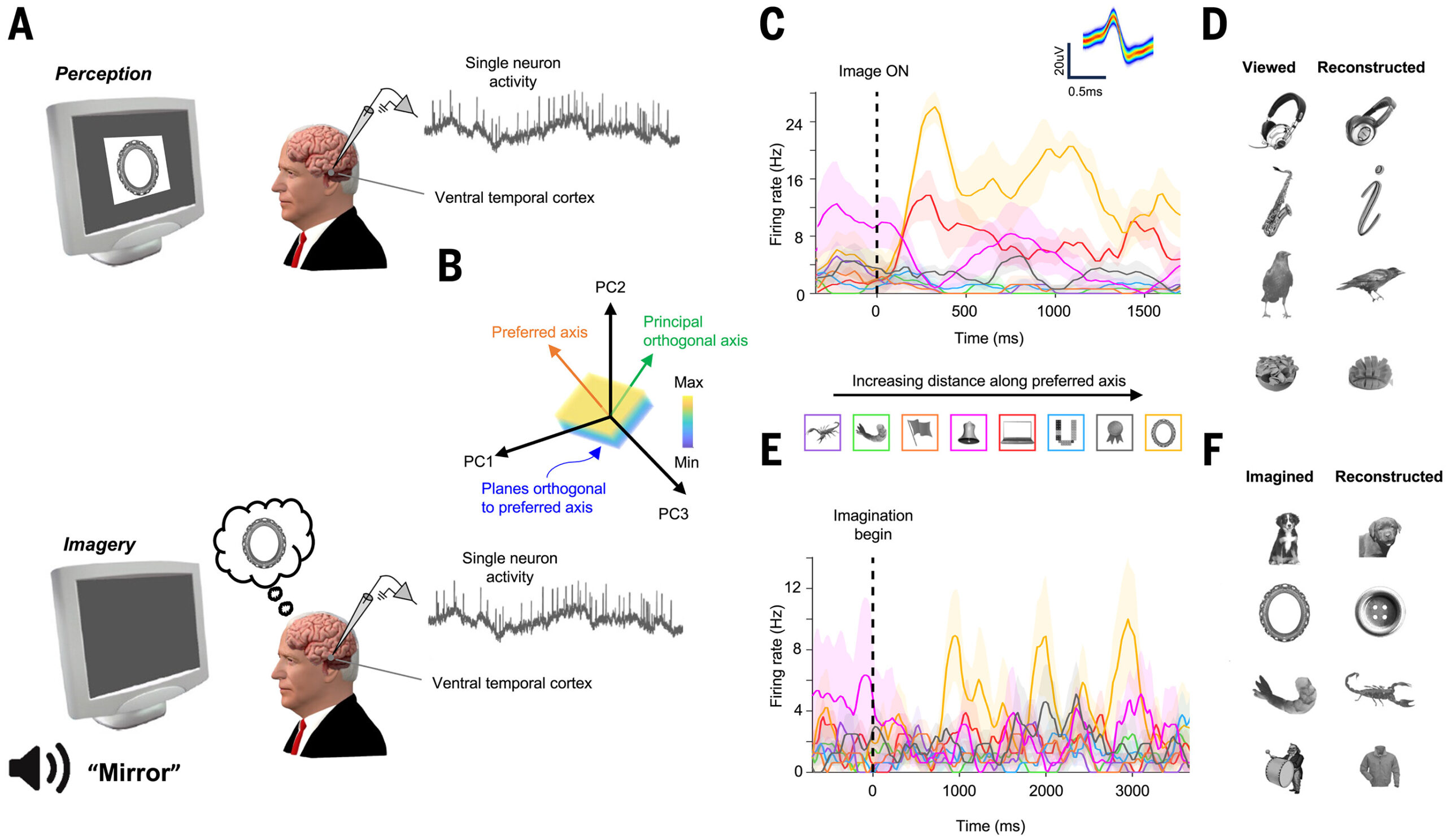

We investigated the single-neuron mechanisms of visual imagery by recording single neurons in human patients implanted with electrodes to localize their focal epilepsy as they viewed and subsequently imagined objects. We focused our investigations on the ventral temporal cortex (VTC), a part of the temporal lobe dedicated to representing visual objects. We first determined the code for visual objects. We found that as in macaques, neurons in human VTC represent objects by using a distributed axis code. This code emphasizes the geometric picture that neurons project incoming stimuli—formatted as points in feature space—onto specific preferred axes and respond proportionally to the projection value. We then examined whether this code is reactivated during imagery.
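The axis code described above can be illustrated with a small simulation. This is a minimal sketch, not the authors' analysis: it assumes a purely linear neuron (no rectification, so simulated rates can go negative), Gaussian noise, random Gaussian feature vectors in place of real stimulus features, and an ordinary least-squares fit. The function names and all parameter values are hypothetical.

```python
import numpy as np

def simulate_axis_neuron(features, axis, gain=5.0, baseline=2.0, noise_sd=0.5, seed=0):
    """Rate = baseline + gain * (projection of the stimulus onto the preferred axis) + noise."""
    rng = np.random.default_rng(seed)
    unit_axis = axis / np.linalg.norm(axis)
    projection = features @ unit_axis
    return baseline + gain * projection + rng.normal(0.0, noise_sd, size=len(features))

def fit_preferred_axis(features, rates):
    """Least-squares estimate of the preferred axis, returned as a unit vector."""
    design = np.column_stack([features, np.ones(len(features))])  # last column fits the baseline
    weights, *_ = np.linalg.lstsq(design, rates, rcond=None)
    axis = weights[:-1]
    return axis / np.linalg.norm(axis)

rng = np.random.default_rng(1)
n_stimuli, n_dims = 500, 10                      # hypothetical sizes
features = rng.normal(size=(n_stimuli, n_dims))  # stimuli as points in feature space
true_axis = rng.normal(size=n_dims)

rates = simulate_axis_neuron(features, true_axis)
estimated_axis = fit_preferred_axis(features, rates)

# Cosine similarity near 1 means the fitted axis matches the simulated one.
cosine = float(estimated_axis @ (true_axis / np.linalg.norm(true_axis)))
print(f"cosine similarity between true and fitted axis: {cosine:.3f}")
```

Under these assumptions the fitted axis should align closely with the simulated one. With real recordings, the fit would need held-out stimuli and a significance test before a neuron could be called axis tuned.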

RESULTS

We recorded 714 neurons in the human VTC across 16 patients as they viewed visual objects. A majority of neurons (456 of 714) were visually selective for one of the five object categories used (faces, plants, text, animals, and objects). To represent general objects with arbitrary features, we built a low-dimensional object space using the unit activations of deep networks trained to perform object classification. About 80% (367 of 456) of all visually responsive single neurons were significantly axis tuned. We used this axis code to reconstruct objects and generate maximally effective synthetic stimuli. Last, we recorded the responses of the same neurons in a subset of patients (6 of 16) as they imagined the same objects. Mean responses to perceived and imagined objects were comparable, with some neurons active only during perception, some only during imagery, and some during both. In particular, ~40% (43 of 107) of axis-tuned VTC neurons recorded during the imagery task reactivated, and the responses during imagery of individual neurons were proportional to the projection value of those objects onto the neurons’ viewing axes. We used this observation to reconstruct imagined objects while still easily distinguishing whether those objects were viewed or imagined.
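As a quick check on the proportions quoted in the Results, the short script below recomputes each percentage from the neuron counts given in the text. The dictionary labels are informal descriptions chosen here, not terminology from the paper.

```python
# Neuron counts as reported in the Results paragraph.
counts = {
    "visually selective / all recorded": (456, 714),
    "axis tuned / visually responsive": (367, 456),
    "reactivated / axis tuned in imagery task": (43, 107),
}

def percentage(numerator, denominator):
    """Return numerator/denominator as a percentage rounded to one decimal place."""
    return round(100.0 * numerator / denominator, 1)

percentages = {label: percentage(n, d) for label, (n, d) in counts.items()}
for label, value in percentages.items():
    print(f"{label}: {value}%")
```

The counts work out to 63.9%, 80.5%, and 40.2%, consistent with the "majority", "about 80%", and "~40%" figures in the text.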

CONCLUSION

We leveraged the opportunity to record from the same population of VTC neurons in humans as they viewed and imagined objects. Neurons use an axis code to represent visual objects, and neural activity during imagination reactivates this code. These findings provide single-neuron evidence for a generative model in the human brain.
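One way to picture what "reactivating the code" means is to fit a neuron's axis from viewing responses only, then ask whether its imagery responses scale with the projection of each object onto that axis. The sketch below does this on simulated data. It is an illustration under stated assumptions, not the study's method: the imagery gain, noise levels, object count, and the permutation test are all choices made here for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
n_objects, n_dims = 200, 10                 # hypothetical sizes
features = rng.normal(size=(n_objects, n_dims))
true_axis = rng.normal(size=n_dims)
true_axis /= np.linalg.norm(true_axis)

# Simulated responses: imagery reuses the viewing axis with a weaker gain (an assumption).
viewing = 2.0 + 5.0 * (features @ true_axis) + rng.normal(0.0, 1.0, n_objects)
imagery = 1.0 + 2.0 * (features @ true_axis) + rng.normal(0.0, 1.0, n_objects)

# Fit the preferred axis from viewing responses only.
design = np.column_stack([features, np.ones(n_objects)])
weights, *_ = np.linalg.lstsq(design, viewing, rcond=None)
viewing_axis = weights[:-1] / np.linalg.norm(weights[:-1])

# Do imagery responses scale with projection onto the viewing axis?
projection = features @ viewing_axis
observed_r = np.corrcoef(projection, imagery)[0, 1]

# Permutation null: shuffle which object each imagery response belongs to.
n_permutations = 1000
null_r = np.array([
    np.corrcoef(projection, rng.permutation(imagery))[0, 1]
    for _ in range(n_permutations)
])
p_value = (np.sum(np.abs(null_r) >= abs(observed_r)) + 1) / (n_permutations + 1)
print(f"r = {observed_r:.2f}, permutation p = {p_value:.4f}")
```

A neuron that did not reuse its viewing axis during imagery would show a correlation indistinguishable from the shuffled null.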

A shared code for vision and imagination.

(A) Single-neuron recordings from VTC during vision and imagery. (B) Axis model framework for stimulus encoding. (C) VTC neuron showing maximal responses to images furthest along preferred axis. (D) Axis code enables stimulus reconstruction. (E) Same neuron as (C) reactivated during imagery. Stimulus preference is preserved. (F) Reactivation of axis code enables reconstruction of imagined stimuli.

Abstract

Mental imagery allows us to remember previous experiences and imagine new ones. Animal studies have yielded rich insight into mechanisms for visual perception, but the neural mechanisms for visual imagery remain poorly understood. We determined that approximately 80% of visually responsive single neurons in the human ventral temporal cortex (VTC) use a distributed axis code to represent objects. We used that code to reconstruct objects and generate maximally effective synthetic stimuli. We then recorded responses from the same neural population while subjects imagined specific objects; about 40% of axis-tuned VTC neurons recapitulated the visual code. Our findings reveal that visual imagery is supported by reactivation of the same neurons involved in perception, providing single-neuron evidence for the existence of a generative model in human VTC.

A map of object space in primate inferotemporal cortex

Pinglei Bao, Liang She, Mason McGill & Doris Y. Tsao

Nature Published: 03 June 2020

DOI: https://doi.org/10.1038/s41586-020-2350-5

Abstract

The inferotemporal (IT) cortex is responsible for object recognition, but it is unclear how the representation of visual objects is organized in this part of the brain. Areas that are selective for categories such as faces, bodies, and scenes have been found [1-5], but large parts of IT cortex lack any known specialization, raising the question of what general principle governs IT organization. Here we used functional MRI, microstimulation, electrophysiology, and deep networks to investigate the organization of macaque IT cortex. We built a low-dimensional object space to describe general objects using a feedforward deep neural network trained on object classification [6]. Responses of IT cells to a large set of objects revealed that single IT cells project incoming objects onto specific axes of this space. Anatomically, cells were clustered into four networks according to the first two components of their preferred axes, forming a map of object space. This map was repeated across three hierarchical stages of increasing view invariance, and cells that comprised these maps collectively harboured sufficient coding capacity to approximately reconstruct objects. These results provide a unified picture of IT organization in which category-selective regions are part of a coarse map of object space whose dimensions can be extracted from a deep network.
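A low-dimensional object space of the kind described here can be sketched by reducing a matrix of network unit activations (images by units) to a few coordinates per image. The example assumes principal component analysis as the reduction step, which the abstract does not specify, and uses synthetic activations in place of a real trained network. The function `build_object_space` and all sizes are hypothetical.

```python
import numpy as np

def build_object_space(activations, n_components):
    """Project unit activations (images x units) onto their top principal components."""
    centered = activations - activations.mean(axis=0)
    # SVD of the centered matrix; rows of vt are principal directions in unit space.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    components = vt[:n_components]
    explained = singular_values[:n_components] ** 2 / np.sum(singular_values ** 2)
    return centered @ components.T, components, explained

rng = np.random.default_rng(0)
n_images, n_units, n_latent = 300, 512, 5     # hypothetical sizes

# Synthetic stand-in for deep-network activations: a few latent factors plus noise.
latent = rng.normal(size=(n_images, n_latent))
mixing = rng.normal(size=(n_latent, n_units))
activations = latent @ mixing + 0.1 * rng.normal(size=(n_images, n_units))

coords, components, explained = build_object_space(activations, n_components=n_latent)
print(coords.shape, f"variance explained: {explained.sum():.3f}")
```

Each row of `coords` is one image expressed as a point in the reduced space. A cell's preferred axis is then a direction in that space, and its first two components are the kind of quantity the abstract describes using to group cells.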

The Code for Facial Identity in the Primate Brain

Le Chang ∙ Doris Y. Tsao

Cell Published: June 1, 2017

DOI: https://doi.org/10.1016/j.cell.2017.05.011

Highlights

- Facial images can be linearly reconstructed using responses of ∼200 face cells

- Face cells display flat tuning along dimensions orthogonal to the axis being coded

- The axis model is more efficient, robust, and flexible than the exemplar model

- Face patches ML/MF and AM carry complementary information about faces

Summary

Primates recognize complex objects such as faces with remarkable speed and reliability. Here, we reveal the brain’s code for facial identity. Experiments in macaques demonstrate an extraordinarily simple transformation between faces and responses of cells in face patches. By formatting faces as points in a high-dimensional linear space, we discovered that each face cell’s firing rate is proportional to the projection of an incoming face stimulus onto a single axis in this space, allowing a face cell ensemble to encode the location of any face in the space. Using this code, we could precisely decode faces from neural population responses and predict neural firing rates to faces. Furthermore, this code disavows the long-standing assumption that face cells encode specific facial identities, confirmed by engineering faces with drastically different appearance that elicited identical responses in single face cells. Our work suggests that other objects could be encoded by analogous metric coordinate systems.
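Two claims in this summary lend themselves to a small numerical illustration: a population of axis-coding cells supports linear decoding of a face's coordinates, and a single cell responds identically to faces that differ only in directions orthogonal to its axis. The sketch below simulates both. The 50-dimensional space, the noise level, and the random axes are assumptions for the example; only the figure of roughly 200 cells comes from the highlights.

```python
import numpy as np

rng = np.random.default_rng(0)
n_dims, n_cells, n_train = 50, 200, 1000     # hypothetical sizes; ~200 cells per the highlights
axes = rng.normal(size=(n_cells, n_dims))    # one preferred axis per simulated cell

def population_response(faces, noise_sd=0.0):
    """Each cell's rate is the projection of the face onto its axis, plus optional noise."""
    clean = faces @ axes.T
    return clean + rng.normal(0.0, noise_sd, size=clean.shape)

# Fit a linear decoder from population responses back to face coordinates.
train_faces = rng.normal(size=(n_train, n_dims))
train_rates = population_response(train_faces, noise_sd=1.0)
decoder, *_ = np.linalg.lstsq(train_rates, train_faces, rcond=None)

# Decode held-out faces and compare with the true coordinates.
test_faces = rng.normal(size=(100, n_dims))
decoded = population_response(test_faces, noise_sd=1.0) @ decoder
decoding_r = np.corrcoef(decoded.ravel(), test_faces.ravel())[0, 1]

# Flat tuning: shifting a face orthogonally to a cell's axis leaves that cell's response unchanged.
cell_axis = axes[0] / np.linalg.norm(axes[0])
shift = rng.normal(size=n_dims)
shift -= (shift @ cell_axis) * cell_axis     # remove the component along the cell's axis
face_a = test_faces[0]
face_b = face_a + 3.0 * shift                # a very different point with the same projection
response_gap = abs(face_a @ axes[0] - face_b @ axes[0])
print(f"decoding r = {decoding_r:.3f}, response gap = {response_gap:.2e}")
```

In this toy setting `face_a` and `face_b` are far apart in the space yet drive the first cell equally. This is the same logic behind the engineered faces mentioned in the summary, which looked drastically different but elicited identical responses in single face cells.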